Ofsted’s New Inspection Framework: Insights for Employers from an Early Inspection

‘The call’ from Ofsted is one of those moments that holds a special place in the memory of most Further Education (FE) and skills leaders. Receiving that call at 9:30am on the first working day of 2026 certainly made it a memorable start to the year for us at LDN.

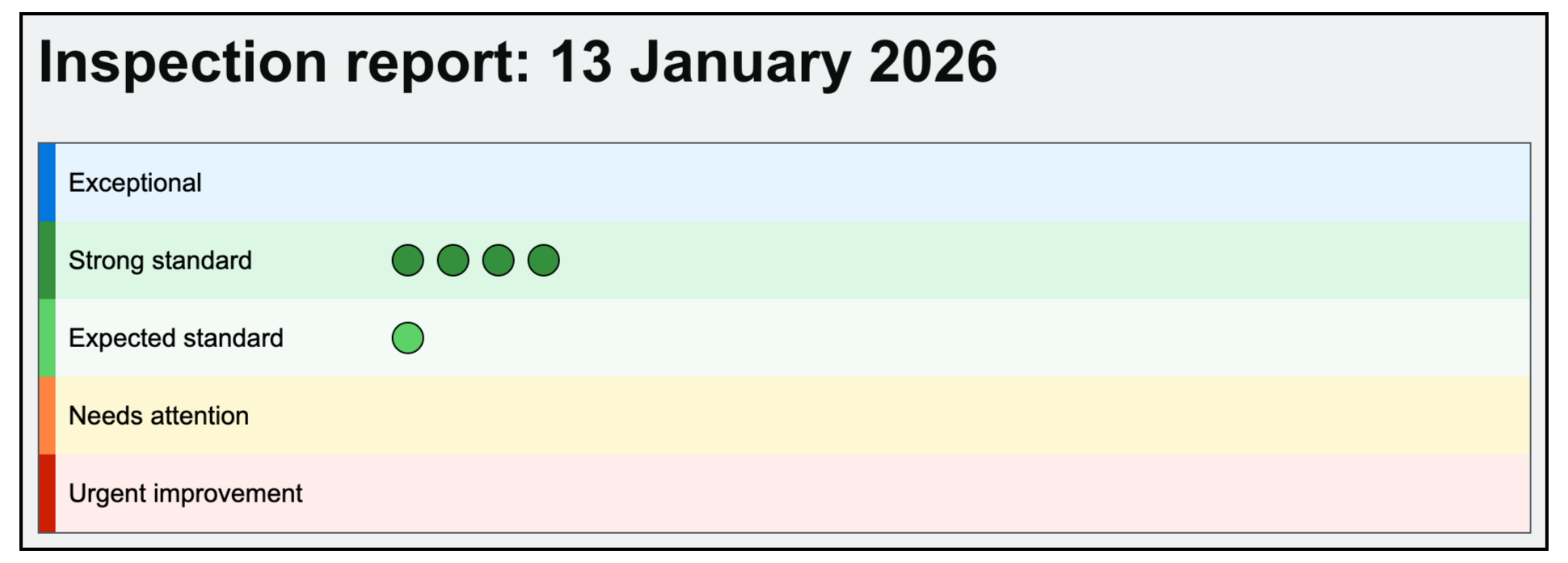

LDN was inspected from 13th to 16th January 2026, with the results published on 19th February. This was our first inspection under Ofsted’s new Further Education and Skills inspection framework, introduced in late 2025, which represents a significant shift in how quality is assessed and reported across the sector.

As one of the first providers to be inspected under the new framework, we have spent time reflecting on what we learned from the experience, not just about our own provision but about how apprenticeship leads and employers should now interpret inspection outcomes and assess provider quality.

This blog is intended to help employers, and particularly apprenticeship leads, understand what the new framework is really testing and how it should inform procurement decisions.

A different way of assessing quality

One of the most noticeable changes under the new framework is the removal of single-word judgements. Providers are no longer given a single headline grade intended to summarise everything from leadership to learner experience.

Instead, Ofsted now awards judgements across five evaluation areas: ‘leadership and governance’, ‘curriculum, teaching and training’, ‘participation and development’, ‘achievement’ and ‘inclusion’. Each area is judged separately and the results are presented through a scorecard.

Where a provider delivers more than one type of provision, such as apprenticeships alongside adult skills, the scorecard can include multiple sets of judgements. This is why inspection reports may now look more detailed and, in some cases, more complex than under the previous system.

Judgements are awarded by testing whether a provider meets a defined set of grade descriptors in each evaluation area and for each provision type inspected. There is no averaging or weighting. A provider either meets the required standard in an area, or it does not.

Ofsted refers to this approach as secure fit grading. Secure fit is the mechanism inspectors use to check that the evidence gathered during an inspection consistently supports the grade awarded, with no gaps that would mean the standard has not been fully met.

To be judged at a particular standard in any evaluation area, every grade descriptor needs to be in place. If even one is missing or only partially evidenced, the standard cannot be confirmed, regardless of how strong the rest of the evidence may be.

For employers and apprenticeship leads, this is a critical point. An inspection grade confirms whether a provider has fully met a defined standard in a specific evaluation area. Secure fit exists to ensure there are no hidden weaknesses behind a grade and that the quality being reported can be relied on in practice.

What grades mean under the new system

Under the new framework, each evaluation area is judged against five outcome levels: Urgent Attention, Needs Improvement, Expected Standard, Strong Standard and Exceptional. Each level is defined by published grade descriptors and judgements are threshold-based.

To be judged ‘Strong’ in any evaluation area, a provider must evidence every Expected Standard descriptor and every Strong Standard descriptor for that area. If inspectors do not see clear evidence for any one Strong descriptor, or for any part of one, the judgement defaults to Expected Standard for the whole area. Similarly, missing an Expected Standard descriptor automatically moves a provider into Needs Improvement.
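For readers who find it easier to see a rule written out, the threshold logic described above can be sketched in a few lines of Python. This is purely our own illustration, not anything Ofsted publishes: the names `AreaEvidence` and `secure_fit_grade` are hypothetical, each descriptor is simplified to a yes/no value (a partially evidenced descriptor counts as not met), and Urgent Attention and Exceptional are left out because their thresholds are not covered in this blog.

```python
from dataclasses import dataclass


@dataclass
class AreaEvidence:
    """Evidence for one evaluation area (hypothetical structure for illustration)."""
    expected_met: list[bool]  # one entry per Expected Standard descriptor
    strong_met: list[bool]    # one entry per Strong Standard descriptor


def secure_fit_grade(evidence: AreaEvidence) -> str:
    """Return the grade for one evaluation area under the simplified rule.

    There is no averaging or weighting: a single unmet descriptor
    at a level stops that level from being confirmed.
    """
    if not all(evidence.expected_met):
        return "Needs Improvement"
    if not all(evidence.strong_met):
        return "Expected Standard"
    return "Strong Standard"


# Every descriptor met at both levels.
print(secure_fit_grade(AreaEvidence([True, True], [True, True])))
# One Strong descriptor not fully evidenced.
print(secure_fit_grade(AreaEvidence([True, True], [True, False])))
# One Expected Standard descriptor missing.
print(secure_fit_grade(AreaEvidence([True, False], [True, True])))
```

The point of the sketch is the use of `all`: the grade depends on whether every descriptor is in place, not on how many are.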

This creates a deliberately demanding framework. Every inspection starts from the assumption that a provider is operating at Expected Standard, and the burden of proof sits firmly with the provider. Moving beyond Expected Standard to Strong requires consistently high-quality evidence across all descriptors. As our Lead Inspector said to me more than once during the inspection, the Expected Standard is itself a very high bar.

Why similar grades can mask very different quality profiles

One of the clearest insights from our inspection was that two providers can receive the same judgement in an evaluation area while operating at very different levels of maturity and consistency.

One provider may meet almost all of the Strong descriptors but fall short on a small part of one. Another may meet only the Expected Standard descriptors. On the scorecard, the outcome looks the same. Underneath, the quality profile can be materially different.

This is not a flaw in the framework. It is an intentional design choice. The approach is deliberately binary and structured to prevent grade inflation. Headline outcomes confirm that a standard has been met in full. They are not intended to act as a subjective comparison of overall quality.

For employers, this is a critical shift. Inspection outcomes provide baseline assurance, but they do not tell the whole story. Understanding what sits beneath the grade matters more than the grade itself when comparing providers and assessing delivery risk.

We’ll be breaking this down further in an employer webinar on 17th March for anyone who wants a clearer understanding of how to interpret these outcomes.

Inspection and growth

At the time of this inspection, we were supporting around five times as many learners as when we were last inspected in 2021.

That level of growth introduces risk. As organisations scale, maintaining consistency becomes harder and small weaknesses surface more quickly. Our experience reinforced that the new framework is designed to test whether quality is being maintained as scale increases. It does not make allowances for growth.

Secure fit plays an important role here. It tests whether the full set of required descriptors has been met and properly evidenced at the provider’s current scale. Where growth exposes gaps in consistency or learner experience, those gaps prevent the standard from being confirmed.

Inspection, in this context, is a test of whether quality holds together under pressure.

LDN’s outcome in context

Across the five evaluation areas, we were judged Strong in four. In ‘participation and development’, we met the Expected Standard. Inspectors identified that a small proportion of learners were not yet able to confidently articulate their next steps after completing their apprenticeship. This meant we were unable to fully evidence one of the nine Strong descriptors in that area and the judgement defaulted to Expected Standard.

We share this not to challenge the outcome, but to illustrate how the framework operates. Missing part of a single descriptor prevents a Strong judgement, regardless of the strength of evidence elsewhere. Another provider meeting only the minimum Expected Standard descriptors could receive the same outcome. The scorecard would look identical. The underlying picture may not be.
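To make that comparison concrete, here is a small illustrative sketch in Python. It is a simplification of our own, not an Ofsted tool. The nine Strong descriptors come from our report, but the number of Expected Standard descriptors (`EXPECTED_COUNT`), the second provider and the function name `area_profile` are all hypothetical, and each descriptor is reduced to met or not met.

```python
EXPECTED_COUNT = 6  # hypothetical: the real number of Expected descriptors is not stated here
STRONG_COUNT = 9    # the nine Strong descriptors in 'participation and development'


def area_profile(expected_met: list[bool], strong_met: list[bool]) -> tuple[str, int]:
    """Return the headline grade and the number of Strong descriptors met."""
    if not all(expected_met):
        grade = "Needs Improvement"
    elif not all(strong_met):
        grade = "Expected Standard"
    else:
        grade = "Strong Standard"
    return grade, sum(strong_met)


# A provider that fully evidences eight of the nine Strong descriptors.
provider_a = area_profile([True] * EXPECTED_COUNT, [True] * 8 + [False])

# A hypothetical provider that meets only the Expected Standard descriptors.
provider_b = area_profile([True] * EXPECTED_COUNT, [False] * STRONG_COUNT)

for name, (grade, strong_met) in [("Provider A", provider_a), ("Provider B", provider_b)]:
    print(f"{name}: {grade} ({strong_met} of {STRONG_COUNT} Strong descriptors met)")
```

Both providers come out at Expected Standard. The count of Strong descriptors met is the part a scorecard does not show, which is why the narrative in the report matters.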

What this means for learners and employers

For learners, our experience is that the framework is deliberately protective. It raises the bar for provider performance and places the burden of proof firmly on providers. As providers grow, learners should not experience variability in quality, and the framework is designed to hold providers to account for that consistency.

For employers and apprenticeship leads, inspection outcomes should be read as a risk and assurance signal, not as a badge of relative quality. Most judgements will sit at Expected Standard. Only by reading the narrative beneath each evaluation area can employers tell whether a provider is just meeting the minimum threshold or operating close to Strong.

Inspection should be the start of a more informed conversation with providers, not the end of it.